Cinematography with Artificial Intelligence

How to add motion to images with Stable Video Diffusion.

‘Stable Video Diffusion’ is a generative AI model developed by Stability AI. It transforms images into videos by adding motion to static elements in an image, which makes it a great tool for creating cinemagraphs:

“A cinemagraph is a combination of a still image and a video, where most of the scene is stationary, while a section moves on a continuous loop.”

Quick guide

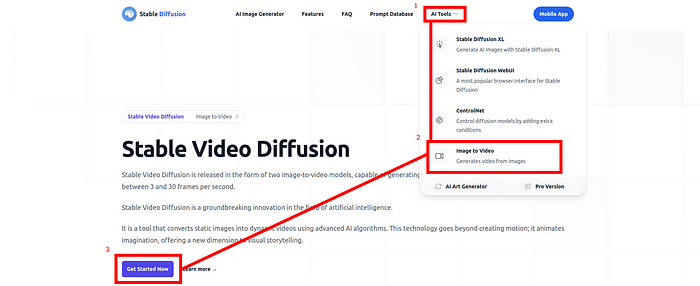

Visit Stable Video Diffusion’s Website

Click on ‘AI Tools’

Click on ‘Image to Video’

Click on ‘Get Started Now’

Enter a ‘prompt’

Adjust the ‘advanced options’

Click on ‘Generate’

Advanced options

Click on ‘advanced options’ to adjust three settings: seed, motion bucket id, and frames per second (fps).

Seed: Initializes the random number generator that is used to generate the video. If you keep the same seed value but change the motion bucket id and frames per second, you can make slight adjustments to the video while maintaining its ‘core’.

Motion bucket id: Controls the amount of motion in the generated video. A higher motion bucket id produces more movement.

Frames per second: Controls the frame rate of the generated video. The higher the fps, the smoother the video appears; a lower fps makes it look choppier.
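For readers who run the open-source model locally instead of using the website, the three settings have counterparts of the same names in Hugging Face's diffusers library. The following is a minimal sketch under assumptions: the checkpoint name, the file paths, and the helper names `clip_seconds` and `generate_clip` are illustrative and not something the website exposes, and the website may treat these values differently. The heavy imports sit inside the function, so the small duration helper can be tried without a GPU.

```python
def clip_seconds(num_frames: int, fps: int) -> float:
    """Playback length of a clip: frame count divided by frames per second."""
    if num_frames <= 0 or fps <= 0:
        raise ValueError("num_frames and fps must be positive")
    return num_frames / fps


def generate_clip(image_path, out_path, seed=42, motion_bucket_id=127, fps=7):
    """Animate one still image locally (assumes torch, diffusers and a CUDA GPU)."""
    # Imported lazily so the helper above works without the heavy dependencies.
    import torch
    from diffusers import StableVideoDiffusionPipeline
    from diffusers.utils import export_to_video, load_image

    pipe = StableVideoDiffusionPipeline.from_pretrained(
        "stabilityai/stable-video-diffusion-img2vid-xt",  # assumed checkpoint
        torch_dtype=torch.float16,
        variant="fp16",
    )
    pipe.enable_model_cpu_offload()

    image = load_image(image_path).resize((1024, 576))
    generator = torch.manual_seed(seed)  # same seed -> same starting noise
    frames = pipe(
        image,
        generator=generator,
        motion_bucket_id=motion_bucket_id,  # higher -> more movement
        fps=fps,
        decode_chunk_size=8,
    ).frames[0]
    export_to_video(frames, out_path, fps=fps)
    return len(frames)


# A 25-frame clip played back at 7 fps lasts a little over 3.5 seconds.
print(clip_seconds(25, 7))
```

Because a clip has a fixed number of frames, a higher playback fps makes it smoother but also shorter.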

Example

I made a visual representation of a ‘super-intelligent AI system’, inspired by the fictional AI named ‘Wintermute’ from the novel ‘Neuromancer’ (1984), by William Gibson.

First, a ‘static’ image was created with Bing Image Creator:

Afterwards, Stable Video Diffusion was used to add motion to the ‘artificial brain’ in the image. The goal was to make the brain move, to symbolize that the AI it belongs to is processing information:

Result

It took several tries to find the right values for the seed, motion bucket id, and frames per second:

First, I had to find the seed value that ‘connects’ to the part of the image portraying the brain. Before I managed to do so, I received videos in which either a) the complete image was moving or b) the cables and other hardware underneath the brain were moving.

After I discovered the correct seed value, it was time to play around with the motion bucket id and frames per second options.

Eventually I got exactly what I wanted; notice how the brain is the only moving element in the image:
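The search described above has two stages: sweep the seed while the other settings stay constant, then lock the seed and vary the remaining two. A minimal sketch of that plan follows; the `Settings` class, the function names, and all example values are hypothetical, and the code only lists the combinations to try by hand.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Settings:
    seed: int
    motion_bucket_id: int
    fps: int


def seed_sweep(seeds, motion_bucket_id, fps):
    """Stage 1: vary only the seed to find one that animates the right region."""
    return [Settings(s, motion_bucket_id, fps) for s in seeds]


def refine(seed, motion_bucket_ids, fps_values):
    """Stage 2: keep the chosen seed and vary the amount and smoothness of motion."""
    return [Settings(seed, m, f) for m, f in product(motion_bucket_ids, fps_values)]


# Example values are made up for illustration.
stage_one = seed_sweep(range(1, 6), motion_bucket_id=100, fps=6)
stage_two = refine(seed=3, motion_bucket_ids=[60, 100, 140], fps_values=[6, 12])

for settings in stage_two:
    print(settings)
```

Changing one thing at a time makes it easier to tell which setting caused a difference between two videos.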

Tips and Tricks

Save time

Stable Video Diffusion can take some time to generate your video.

Instead of waiting to check whether each generated video matches your expectations, you may want to open Stable Video Diffusion in multiple browser tabs.

Use (slightly) different settings for the advanced options in each tab, and run the individual instances of Stable Video Diffusion alongside each other to increase your chance of success.
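The same idea, several variations in flight at once, can also be sketched in code. This assumes you have some callable that produces a video for one set of options; `fake_generate` below is a stand-in that only sleeps, and every name and value is illustrative.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_generate(options):
    """Placeholder for a slow generation call; returns a made-up file name."""
    time.sleep(0.1)  # stands in for the real waiting time
    return f"seed{options['seed']}_motion{options['motion_bucket_id']}.mp4"


def run_variations(variations, generate=fake_generate, max_workers=4):
    """Run up to max_workers variations concurrently; results keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(generate, variations))


# Same seed and fps everywhere, only the motion bucket id differs.
variations = [
    {"seed": 7, "motion_bucket_id": m, "fps": 6}
    for m in (80, 100, 120, 140)
]

start = time.perf_counter()
results = run_variations(variations)
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

With four workers, the total wait is close to that of a single run rather than four runs back to back.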

Video to GIF

Want to turn your video into a GIF, for example to copy and paste the cinemagraph into a Substack post like the article you are reading right now? There are many online GIF makers, such as EZ GIF.
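If you prefer a local tool over an online converter, ffmpeg can do the same conversion. A minimal sketch, assuming ffmpeg is installed and on your PATH; the file names, the helper names, and the default width and frame rate are illustrative choices.

```python
import subprocess


def gif_command(video_path, gif_path, fps=10, width=600):
    """Build an ffmpeg command that converts a video into a looping GIF."""
    filters = f"fps={fps},scale={width}:-1:flags=lanczos"
    return [
        "ffmpeg",
        "-y",            # overwrite the output file if it exists
        "-i", video_path,
        "-vf", filters,  # resample frame rate, resize keeping aspect ratio
        "-loop", "0",    # 0 = loop forever, as a cinemagraph should
        gif_path,
    ]


def video_to_gif(video_path, gif_path, **kwargs):
    """Run the conversion; raises CalledProcessError if ffmpeg fails."""
    subprocess.run(gif_command(video_path, gif_path, **kwargs), check=True)


# Show the command without running it.
print(" ".join(gif_command("cinemagraph.mp4", "cinemagraph.gif")))
```

Calling `video_to_gif("cinemagraph.mp4", "cinemagraph.gif")` would then write the GIF next to the video.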

More Guides on AI:

Visit AI-UPDATE’s GitHub profile for more guides on artificial intelligence.

![video-to-gif output image](https://substackcdn.com/image/fetch/w_1456,c_limit,f_auto,q_auto:good,fl_lossy/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb5d70814-162d-4212-870e-5e0cb2a36840_600x338.gif)

![video-to-gif output image](https://substackcdn.com/image/fetch/w_1456,c_limit,f_auto,q_auto:good,fl_lossy/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fbd638122-ddcd-4c38-9c24-9679c1a9af33_600x203.gif)

![video-to-gif output image](https://substackcdn.com/image/fetch/w_1456,c_limit,f_auto,q_auto:good,fl_lossy/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F4c75fd8f-cb74-44c6-9f79-9a023cc180ae_566x28.gif)