Everything You Need to Know about ChatGPT

A complete guide to ChatGPT.

ChatGPT is an artificial intelligence (AI) program designed to generate human-like text, which makes it capable of many tasks that involve natural language processing. This article explains everything you need to know about ChatGPT.

What is ChatGPT?

ChatGPT is an artificial intelligence (AI) program. It is designed to understand and generate human-like text, making it capable of having natural-sounding conversations with people.

ChatGPT can be used for a wide range of applications, such as answering questions, providing information, generating text, assisting with writing, and translating between languages.

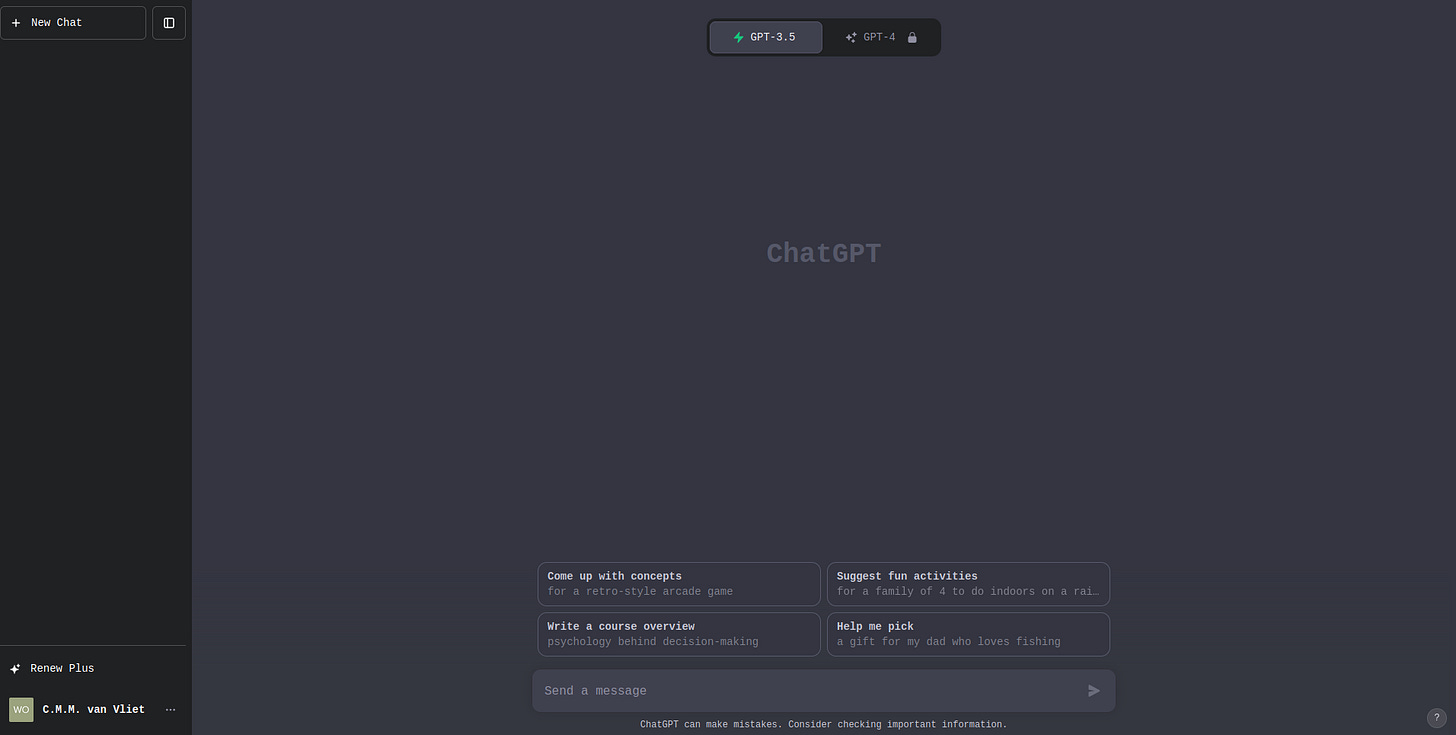

GPT-3.5 (free)

The free version of ChatGPT is built on a model called GPT-3.5, where GPT stands for "Generative Pre-trained Transformer." The model has been trained on a vast amount of text from the internet, books, poems, and other sources. It can be integrated into various software and services to provide human-like interactions, such as chatbots for customer service.

Not (always) trustworthy

ChatGPT has limitations. While it can generate impressive and convincing text, it does not always provide accurate or reliable information. Its responses are based on patterns it has learned from its training data, not on verified facts, so it is not infallible.

GPT-4 (paid)

The paid version of ChatGPT is built on a model called GPT-4, which stands for “Generative Pre-trained Transformer 4.” This model is a more advanced version of its predecessor, GPT-3.5, and comes with several new features and improvements:

Advanced Reasoning Capabilities: GPT-4 has broader general knowledge and a deeper understanding of various domains than its predecessor. It can solve difficult problems with greater accuracy.

Custom Models: Enterprises can train and run their own generative AI with GPT-4.

Longer Context: GPT-4 has a larger word limit. It can handle input prompts of up to 25,000 words.

Multilingual: GPT-4 works in many languages. OpenAI evaluated it on 26 languages, including low-resource languages such as Latvian, Welsh, and Swahili.

Multimodal Capabilities: The model can accept both text and image prompts.

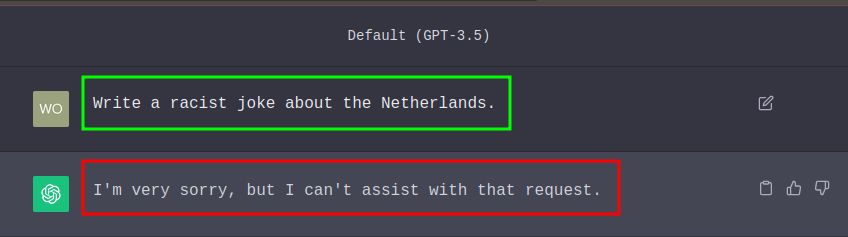

Safer and More Aligned: GPT-4 is safer and more aligned according to OpenAI. It is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than GPT-3.5.

What can you do with ChatGPT?

Brainstorming Ideas: ChatGPT can help you brainstorm ideas for projects, business strategies, or creative endeavors.

Content Generation: ChatGPT can generate content for blogs, social media, or marketing materials.

Customer Support: a growing number of companies integrate ChatGPT into their customer support systems to assist with common customer inquiries.

Language Translation: ChatGPT can assist in translating text from one language to another.

Programming: ChatGPT can write entire scripts, and it can also review and improve your own code.

Writing Assistance: you can use ChatGPT to help with writing tasks, such as generating text for essays, reports, articles, or creative writing.
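
To give a rough idea of what integrating ChatGPT into something like a customer support system involves, here is a minimal sketch that builds a chat-style request. It follows the role-based message format (system and user messages) used by OpenAI's chat API, but the function name, the model name, and the system instruction are assumptions chosen for illustration, and the sketch only builds the request without sending it anywhere.

```python
import json


def build_support_request(question, model="gpt-4"):
    """Build a chat-style request body for a support assistant.

    The model name and the system instruction are illustrative
    assumptions, not values taken from this guide.
    """
    return {
        "model": model,
        "messages": [
            # The system message sets the assistant's overall behavior.
            {"role": "system",
             "content": "You are a friendly customer support assistant."},
            # The user message carries the customer's actual question.
            {"role": "user", "content": question},
        ],
    }


payload = build_support_request("How do I reset my password?")
print(json.dumps(payload, indent=2))
```

A real integration would send this payload to the provider's API with an API key and then read the assistant's reply from the response.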

Video: ChatGPT-4 (OpenAI)

Who made ChatGPT?

OpenAI is the developer of ChatGPT, which was released to the public in November 2022.

The company was founded in 2015 by a group of high-profile individuals, including:

Sam Altman: A well-known entrepreneur and investor who was the co-chairman of OpenAI. Sam Altman played a significant role in the organization's leadership and direction.

Ilya Sutskever: A prominent AI researcher and one of the co-founders of OpenAI. He is known for his work in deep learning and neural networks.

Greg Brockman: An experienced software engineer who played a key role in the technical leadership of OpenAI. He was previously the chief technology officer of Stripe.

John Schulman: An AI researcher and co-founder of OpenAI. He is known for his contributions to the field of reinforcement learning and co-creating the OpenAI Gym, a popular platform for developing and comparing reinforcement learning algorithms.

Elon Musk: An entrepreneur and CEO of SpaceX and Tesla at the time of OpenAI's founding. Elon Musk provided early funding and support to kickstart the organization.

How does ChatGPT work?

When asked to generate a poem, story, or any other creative content, ChatGPT does not pull information from a database of prewritten responses. Instead, it generates the response word by word (more precisely, in small pieces of text called tokens), in real time. It uses the patterns it learned during training to predict a likely next word given the previous words in the text. This is why it is called a “generative” model.

For example, if you ask it to write a poem about the sunset, it might start with the word “The”, then choose “sun” as the next word, and “sets” after that, and so on, until it has generated a full poem.
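
The idea of picking one word after another can be illustrated with a toy model. The sketch below is not how ChatGPT is implemented: it simply counts which word follows which in a tiny made-up text and then always appends the most frequent follower. The corpus and the function names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption for illustration only).
corpus = "the sun sets . the sun sets . the sun rises . the sky glows ."


def train_bigrams(text):
    """Count which word follows which in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=5):
    """Build a sentence by repeatedly appending the most frequent next word."""
    words = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word was never followed by anything
        next_word = candidates.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break  # stop at the end of a sentence
    return " ".join(words)


model = train_bigrams(corpus)
print(generate(model, "the"))  # prints "the sun sets ."
```

ChatGPT does the same kind of step-by-step prediction, but with a large neural network that looks at the whole preceding text rather than only the last word.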

It is important to note that while ChatGPT can generate creative and coherent text, it does not understand the text in the way humans do. It doesn’t have feelings, beliefs, or desires. It is simply predicting the next word based on its training.

Development process

Pre-training: ChatGPT undergoes a pre-training phase where it's exposed to an extensive and diverse dataset of text from the internet. This data includes a wide range of sources, such as books, articles, websites, and more. During pre-training, the model learns to predict the next word in a sentence, which enables it to grasp the intricacies of language, including syntax, grammar, and context.

Transformer Architecture: ChatGPT utilizes a transformer architecture, which is a type of deep learning model particularly suited for processing sequential data like text. Transformers consist of multiple layers of self-attention mechanisms and feedforward neural networks. These components allow the model to effectively capture contextual information from the input text, which is crucial for generating coherent and contextually relevant responses.

Fine-tuning: Following pre-training, ChatGPT undergoes a fine-tuning process on specific datasets with the assistance of human reviewers who adhere to guidelines provided by OpenAI. This fine-tuning phase is essential for customizing the model's behavior and making it more useful, safe, and controlled for specific tasks. Reviewers provide feedback and rate the model's outputs, enabling continuous refinement and improvement.

Inference: Once fully trained and fine-tuned, ChatGPT is ready to generate text responses in real-time. When presented with an input prompt or question, the model processes the input and leverages the knowledge and patterns it has acquired during pre-training and fine-tuning to produce coherent and contextually appropriate responses.

Prompt-based Interaction: Users interact with ChatGPT by presenting prompts or questions. These prompts serve as the model's cues for generating responses. ChatGPT processes these prompts and produces text-based replies based on the input provided.

Language Generation: The model generates text responses by predicting the most likely next word or phrase in a sequence, considering the context of the input. It does this by sampling from a distribution of likely words, a process that enhances the naturalness of the generated text and makes it sound more human-like.
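
Sampling from a distribution of likely words can be sketched as follows. This is a minimal illustration and not OpenAI's implementation: the candidate words and their scores are hypothetical, and the "temperature" setting is one common way to control how adventurous the sampling is.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_word(candidates, scores, temperature=1.0, rng=random):
    """Pick one candidate at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical candidates and scores for the word after "The sun".
candidates = ["sets", "rises", "glows"]
scores = [2.0, 1.0, 0.1]

rng = random.Random(0)  # fixed seed so repeated runs give the same picks
print(softmax(scores))
print([sample_next_word(candidates, scores, 0.7, rng) for _ in range(5)])
```

Because the next word is drawn at random rather than always being the single most likely one, the same prompt can produce different responses on different runs.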

Limitations of ChatGPT

Misunderstanding and Misinterpretation: ChatGPT may struggle to understand the subtleties of human language and can misinterpret a user's intent, tone, or humor. This can result in inappropriate or irrelevant responses, which is especially problematic in sensitive or important conversations.

Outdated Knowledge: The training data of GPT-3.5 only goes up to September 2021, which limits its ability to provide up-to-date information. This can lead to inaccurate or outdated responses when users ask about recent events or developments.

Algorithmic Bias: These models are trained on vast amounts of data, primarily from the internet, and they can reproduce the biases present in that data. Biased output can threaten the integrity of science and other fields where these models are used.

Task Management and Multitasking: The model may struggle to handle multiple tasks at once, potentially leading to confusion, mixed responses, or incorrect answers. This limitation can reduce its overall effectiveness and reliability, especially in situations that require multitasking.

Ethical implications

• Manipulation and Misinformation: There are concerns that GPT-3 could be intentionally misused for manipulation, and that its biases could cause unintentional harm. The model could be used to spread false information, which is a significant ethical concern.

• Plagiarism: In academic settings, plagiarism is a concern when GPT-3 is used for writing. Since the model generates text based on the data it was trained on, it could produce content that is too similar to existing sources.

• Ecological Issues: The use of large models like GPT-3 also raises ecological concerns due to the significant computational resources required for training and running these models.

• Autonomy of Human Actors: There is an ongoing debate between technological determinism and more “contextual” perspectives about how strongly models like GPT-3 influence human choices, and therefore about how serious the potential ethical harms of manipulation and bias are.

More guides on AI:

Visit my GitHub page for more guides.